Saasyan Unveils Image Object Detection AI Targeting Threats of Violence In K-12 Schools

Saasyan has officially launched Image Object Detection AI, a new AI model embedded within Assure that identifies and alerts when weapons are found in...


Saasy

11:58 AM on May 6, 2022

We recently announced the official launch of Saasyan Safe Image AI.

Saasyan Safe Image AI is available in our cloud-based, online student safety solution, Saasyan Assure.

The Saasyan Safe Image AI function detects images containing sexual content in a student's online drive. This makes the detection and prevention of image-based abuse, more commonly referred to as revenge porn, an attainable and manageable goal for education professionals.

"Unfortunately, there is an extremely high prevalence of image-based abuse found in schools today. With the launch of Safe Image AI, Saasyan delivers a solution that enables schools to turn the tide on this concerning epidemic," says Sidney Minassian, CEO of Saasyan.

Saasyan Safe Image AI is currently available for online drives in Google Workspace and Microsoft 365.

Contact us to learn more.

Saasyan has officially launched Image Object Detection AI, a new AI model embedded within Assure that identifies and alerts when weapons are found in...

Safe Image AI Detects Images Containing Sexual Content In A Student's Online Drive Assure’s integration with Microsoft OneDrive helps schools using...

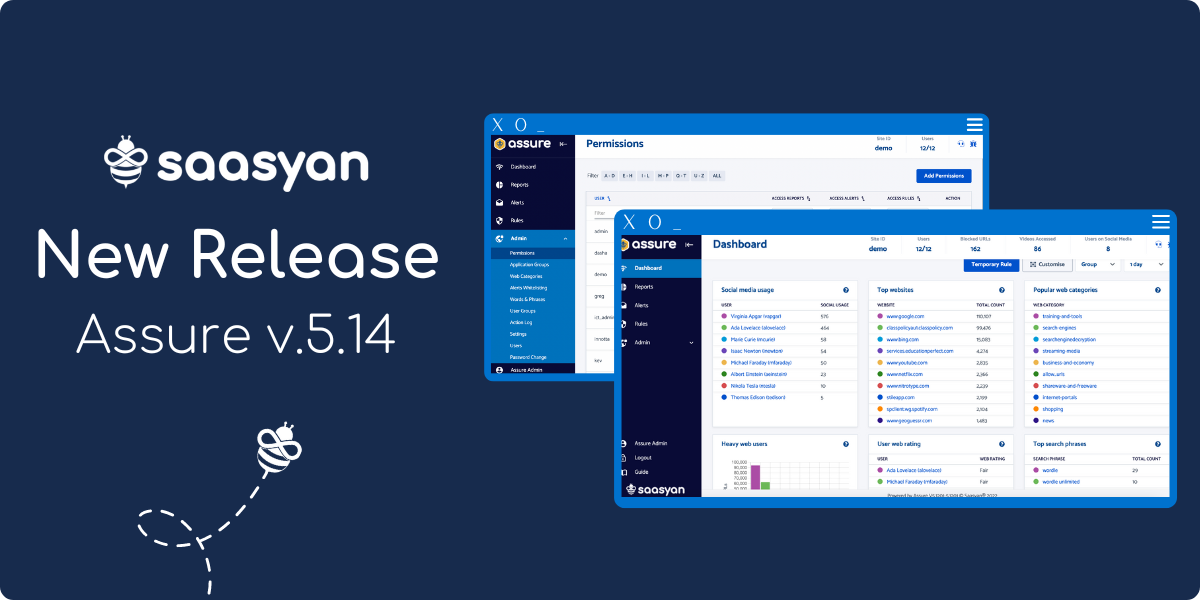

New Assure Features - November 2022 The year is ending on a high at Saasyan with an exciting Assure release! Read on to learn about some of the...